Monitoring Is Still Too Complex and Primitive

When I worked as an SRE in 2014, the tooling was primitive. Even at Google, which was leading the industry. To update an on-call rotation, we had to do things like check out config files from version control, edit them, run Python scripts to generate a rotation, and check the results in to version control. There were a bunch of half-assed tools with terrible usability.

Fast-forward to today, and you have hosted services to do all this, complete with polished UIs and mobile apps. It’s like going from DOS to the cloud.

But while the UI may be polished, the fundamental problems remain unresolved:

By default, you get no alarms, even in obvious cases like your latency going up from 100ms to 10s, which is a 100x increase! Or your error rate going up from 0.1% to 60%. You have to configure every alarm yourself. I’ve seen multi-hour outages go unnoticed for this reason.
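To make the point concrete, here is a minimal sketch of the kind of rule a tool could ship enabled by default, assuming it already tracks a baseline for each metric (say, last week’s median). `DefaultSpikeAlarm` and its 10x factor are hypothetical; a real tool would need smarter baselines for seasonal traffic.

```python
from dataclasses import dataclass


@dataclass
class DefaultSpikeAlarm:
    """Hypothetical out-of-the-box rule: fire when a metric jumps far above its own baseline.

    The 10x factor is an illustrative assumption, not any vendor's default.
    """
    max_ratio: float = 10.0

    def should_fire(self, baseline: float, current: float) -> bool:
        if baseline <= 0:
            # No usable baseline (e.g. zero errors before): any non-zero value is suspicious.
            return current > 0
        return current / baseline >= self.max_ratio


rule = DefaultSpikeAlarm()
print(rule.should_fire(baseline=0.100, current=10.0))  # latency 100ms -> 10s: True
print(rule.should_fire(baseline=0.001, current=0.60))  # errors 0.1% -> 60%: True
print(rule.should_fire(baseline=0.100, current=0.15))  # latency 100ms -> 150ms: False
```

Nobody has to configure anything for the first two cases to be caught.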

To configure an alarm, you need to understand the implementation and reverse-engineer what you want from implementation details, like the EBS burst balance hitting zero. You have to form a mental model: the database stores its data on a separate block storage service, which gives you a baseline IOPS rate. If you exceed this rate, you can draw upon a burst balance. If even that is exhausted, your I/Os are throttled, causing latency to shoot up. You need to know all these implementation details to set good alarms. This does not make sense. Ideally, the tool should simply tell you that you need to upgrade your database’s storage speed. If you want to know whether your hard disk is full, you shouldn’t have to understand implementation details such as whether the NTFS Master File Table is running low on available resident attributes.
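The mental model above is essentially a token bucket. The sketch below simulates a simplified version of it, with hypothetical numbers rather than AWS’s actual EBS accounting, to show why the symptom users notice (I/O throttled down to the baseline) only appears after the balance has silently drained, and how a tool could turn that into plain advice.

```python
def simulate_burst_balance(demand_iops, baseline_iops, burst_ceiling_iops,
                           initial_credits, max_credits):
    """Simplified token-bucket model of a burstable storage volume (illustrative only).

    Each second the volume earns `baseline_iops` credits; every I/O served spends one.
    Unspent credits accumulate up to `max_credits` (the burst balance).
    """
    credits = initial_credits
    served, balances = [], []
    for demand in demand_iops:
        # Credits on hand this second: the saved balance plus this second's refill.
        available = credits + baseline_iops
        # Serve what was asked for, capped by the burst ceiling and the credits on hand.
        done = min(demand, burst_ceiling_iops, available)
        # Whatever was not spent carries over, up to the bucket's capacity.
        credits = min(max_credits, available - done)
        served.append(done)
        balances.append(credits)
    return served, balances


demand = [1000] * 10  # a sustained workload well above the baseline
served, balances = simulate_burst_balance(demand, baseline_iops=100,
                                          burst_ceiling_iops=3000,
                                          initial_credits=5000, max_credits=5000)
print(served)    # [1000, 1000, 1000, 1000, 1000, 600, 100, 100, 100, 100]
print(balances)  # [4100, 3200, 2300, 1400, 500, 0, 0, 0, 0, 0]

# What the user actually needs to hear, derived from the model rather than from raw credits:
if any(s < d for s, d in zip(served, demand)):
    print("Storage is too slow for this workload; consider upgrading it.")
```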

It’s easy to set up too many alerts. The tool doesn’t use common sense to reduce the frequency of alerts whose emails you’re ignoring. So you end up ignoring all the emails, and real problems go unnoticed.
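A minimal sketch of that common sense, assuming the tool can tell whether each notification was acknowledged: every ignored repeat doubles the wait before the next one, and acknowledging resets it. `IgnoredAlertBackoff` and its intervals are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class IgnoredAlertBackoff:
    """Hypothetical policy: each unacknowledged notification doubles the wait before the next one.

    Acknowledging the alert resets the interval. The intervals are illustrative assumptions.
    """
    base_interval_s: float = 300.0     # 5 minutes between repeats at first
    max_interval_s: float = 86_400.0   # never wait more than a day
    ignored_count: int = 0

    def record(self, acknowledged: bool) -> None:
        self.ignored_count = 0 if acknowledged else self.ignored_count + 1

    def next_interval_s(self) -> float:
        return min(self.max_interval_s, self.base_interval_s * 2 ** self.ignored_count)


backoff = IgnoredAlertBackoff()
for _ in range(3):
    backoff.record(acknowledged=False)
print(backoff.next_interval_s())  # 2400.0 (300 s doubled three times)
```

Capping the interval at a day keeps a genuinely broken system from going completely silent.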

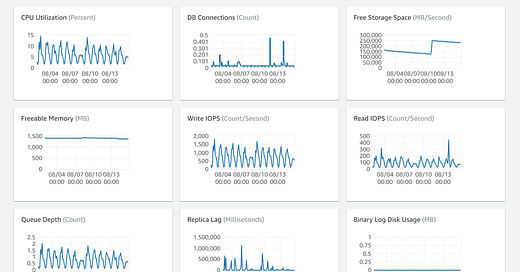

Do you see anything wrong with this database?

The answer is that the Replica Lag exceeded 2 hours, which is a serious problem. But this is not apparent from the above console. Common-sense UX design would suggest highlighting the graph you should pay attention to:

When looking at an individual graph, say for Replica Lag:

unless you already know what an unhealthy value of replica lag is, you’d look at the above graph and not realise you’re looking at a problem. Common-sense UX design would show this like the following infographic:
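Behind such an infographic is little more than a set of labelled bands per metric. A minimal sketch, where the replica-lag thresholds are placeholders (the right values depend on the workload) and `classify` is a hypothetical helper:

```python
from bisect import bisect_right

# Hypothetical health bands for replica lag, in seconds: (lower bound, label).
# The right thresholds depend on the workload; these are placeholders.
REPLICA_LAG_BANDS = [(0, "healthy"), (60, "warning"), (600, "critical")]


def classify(value, bands):
    """Return the label of the band that `value` falls into (bands sorted by lower bound)."""
    lower_bounds = [lower for lower, _ in bands]
    index = bisect_right(lower_bounds, value) - 1
    return bands[max(index, 0)][1]


# A chart could shade each band and colour each point by its label.
for lag_s in (5, 90, 2 * 3600):
    print(lag_s, classify(lag_s, REPLICA_LAG_BANDS))  # the 2-hour lag prints "critical"
```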

In this case, the replica was unable to keep up with the primary because it was under-provisioned relative to the primary, with only 4 GB of memory (versus 32 GB) and 2 vCPUs (versus 8). The 1900-page RDS user guide (!) says, somewhere, not to do this: the replica should have the same resources as the primary. But hardly anyone will read a 1900-page guide. This information should be shown contextually in the graph. Information that’s not surfaced contextually might as well not exist.
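Surfacing that advice contextually could be as simple as comparing the replica’s size with the primary’s and showing any mismatch beside the Replica Lag graph. A sketch, with `InstanceSize` and `replica_sizing_warnings` as hypothetical names; a real console would read the sizes from its own instance metadata.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class InstanceSize:
    vcpus: int
    memory_gb: float


def replica_sizing_warnings(primary: InstanceSize, replica: InstanceSize) -> List[str]:
    """Hypothetical contextual check a console could run next to the Replica Lag graph."""
    warnings = []
    if replica.vcpus < primary.vcpus:
        warnings.append(f"Replica has {replica.vcpus} vCPUs vs {primary.vcpus} on the primary; "
                        "it may not keep up with replication.")
    if replica.memory_gb < primary.memory_gb:
        warnings.append(f"Replica has {replica.memory_gb} GB memory vs {primary.memory_gb} GB "
                        "on the primary; it may not keep up with replication.")
    return warnings


# The under-provisioned replica described above.
for warning in replica_sizing_warnings(InstanceSize(vcpus=8, memory_gb=32),
                                       InstanceSize(vcpus=2, memory_gb=4)):
    print(warning)
```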

As another example, when looking at this graph of CPU utilisation

does 100% indicate that all cores are maxed out, or only one? Different tools work in different ways, so there should be an info icon you can click to learn more. This is another UX principle, called progressive disclosure. A variant of this problem: I once saw a graph where CPU utilisation was 10%. I thought this was fine, but later realised that this instance type is entitled to only 10% of one core, so it was actually maxed out.
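An info icon only helps if the tool is explicit about which scale it uses. The sketch below, built around a hypothetical `cpu_views` helper, shows how the same measurement reads as 100% or 25% depending on whether the denominator is one core or all cores, and how a small entitlement turns an innocuous-looking 10% into 100% of what the instance is allowed.

```python
def cpu_views(busy_core_seconds, interval_s, num_cores, entitled_percent_of_one_core=None):
    """Express one CPU measurement on the different scales tools silently pick from.

    busy_core_seconds: CPU time consumed across all cores during the interval.
    entitled_percent_of_one_core: for throttled instance types, the share of a core
    the instance may use (hypothetical parameter; None if not applicable).
    """
    percent_of_one_core = 100 * busy_core_seconds / interval_s   # 100 means one core fully busy
    views = {
        "percent_of_one_core": percent_of_one_core,
        "percent_of_all_cores": percent_of_one_core / num_cores,  # 100 means every core busy
    }
    if entitled_percent_of_one_core is not None:
        views["percent_of_entitlement"] = 100 * percent_of_one_core / entitled_percent_of_one_core
    return views


# One core maxed out on a 4-core machine: 100% on one scale, 25% on the other.
print(cpu_views(busy_core_seconds=60, interval_s=60, num_cores=4))
# An instance entitled to 10% of one core, using exactly that: "10%" but fully used.
print(cpu_views(busy_core_seconds=6, interval_s=60, num_cores=1, entitled_percent_of_one_core=10))
```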

My suggestions above will help you notice problems if you’ve opened the monitoring page for a database. But why would you, in the first place, unless you know that there’s a problem with that particular database? In the list of databases

there’s no indication that the second database is experiencing problems. This should be highlighted:
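One simple way to do this is to roll each database up to the status of its worst metric and sort the list worst-first. A minimal sketch; the severity scale, database names and metric statuses are hypothetical.

```python
from typing import Dict, List, Tuple

SEVERITY = {"healthy": 0, "warning": 1, "critical": 2}


def database_status(metric_statuses: Dict[str, str]) -> str:
    """A database is only as healthy as its worst metric."""
    return max(metric_statuses.values(), key=SEVERITY.__getitem__, default="healthy")


def sort_for_attention(databases: Dict[str, Dict[str, str]]) -> List[Tuple[str, str]]:
    """List databases worst-first so the one with a problem is at the top."""
    rows = [(name, database_status(metrics)) for name, metrics in databases.items()]
    return sorted(rows, key=lambda row: SEVERITY[row[1]], reverse=True)


fleet = {  # hypothetical database names and per-metric statuses
    "orders-db": {"cpu": "healthy", "replica_lag": "healthy"},
    "reports-db": {"cpu": "healthy", "replica_lag": "critical"},
}
print(sort_for_attention(fleet))  # [('reports-db', 'critical'), ('orders-db', 'healthy')]
```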

It seems these companies don’t have UX designers. Or they’re not empowered, being thrown a UI and asked to “make it pretty”. These systems reflect the engineering-centric attitude of throwing a UI on top of the backend and considering the job done. Nobody is thinking top-down from the users’ point of view. This is the same kind of defective design that results in rm deleting files permanently rather than putting them in the recycle bin.

Some of these monitoring pages miss critical information. For example, the EC2 monitoring page does not show a graph for free disk space, so a disk filling up can go unnoticed until it causes an outage.
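Free space is a property of the filesystem the operating system sees, so measuring it is not hard. A minimal sketch using Python’s standard `shutil.disk_usage`; the function names and the 10% threshold are assumptions for illustration, and ideally the monitoring tool would collect and graph this for you.

```python
import shutil


def free_space_percent(path: str = "/") -> float:
    """Percentage of the filesystem containing `path` that is still free."""
    usage = shutil.disk_usage(path)  # named tuple: total, used, free (bytes)
    return 100 * usage.free / usage.total


def disk_space_ok(path: str = "/", min_free_percent: float = 10.0) -> bool:
    """Hypothetical check; the 10% threshold is an illustrative default."""
    return free_space_percent(path) >= min_free_percent


print(f"{free_space_percent():.1f}% free; ok={disk_space_ok()}")
```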

Important but non-urgent information isn’t shown either, such as a warning that the database will run out of space in two weeks.
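Spotting “out of space in two weeks” needs nothing more exotic than extrapolating the trend. A minimal sketch using an ordinary least-squares line over daily usage samples; `days_until_full` is a hypothetical helper, and since real disk growth is often not linear, a tool would want a more robust fit.

```python
def days_until_full(samples, capacity_gb):
    """Fit a least-squares line to (day, used_gb) samples and extrapolate to capacity.

    Returns days remaining after the last sample, or None if usage is not growing.
    """
    n = len(samples)
    if n < 2:
        return None
    mean_x = sum(x for x, _ in samples) / n
    mean_y = sum(y for _, y in samples) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in samples)
    if sxx == 0:
        return None
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in samples)
    slope = sxy / sxx                      # GB per day
    if slope <= 0:
        return None
    intercept = mean_y - slope * mean_x
    full_day = (capacity_gb - intercept) / slope
    last_day = max(x for x, _ in samples)
    return max(0.0, full_day - last_day)


usage = [(0, 400), (1, 410), (2, 420), (3, 430)]  # hypothetical: 10 GB/day growth
print(days_until_full(usage, capacity_gb=570))     # 14.0
```

A tool could then raise a low-priority notice whenever the result drops below some horizon, such as a month.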

Multiple events are not correlated, such as your overall latency as perceived by users being high because the database’s disk is the bottleneck. You’re spammed by redundant events, one telling you the outcome as perceived by users (black box monitoring) and another telling you what’s going on within your system (white box monitoring), leaving you to manually connect them.

When there’s an outage, the tools don’t direct your attention. They could, for example, tell you that your database is fine but your VMs are having problems, so you should look at those.
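Both of these come down to knowing how components depend on each other. Given a dependency graph, a tool could collapse a burst of alerts into the likely root cause: a firing component whose own dependencies are all healthy. The graph and component names below are hypothetical, and the sketch ignores timing, which a real correlator would also need, since alerts hours apart may be unrelated.

```python
from typing import Dict, List, Set

# Hypothetical dependency graph: each component lists what it depends on.
DEPENDS_ON: Dict[str, List[str]] = {
    "checkout-page": ["api"],        # black-box view: what users experience
    "api": ["vm-pool", "database"],
    "vm-pool": [],
    "database": ["database-disk"],
    "database-disk": [],
}


def root_causes(firing: Set[str], depends_on: Dict[str, List[str]]) -> Set[str]:
    """Keep only firing components with no firing (transitive) dependency.

    Anything else that is firing is treated as a symptom of those components.
    """
    def has_firing_dependency(node: str, seen: Set[str]) -> bool:
        for dep in depends_on.get(node, []):
            if dep in seen:
                continue
            seen.add(dep)
            if dep in firing or has_firing_dependency(dep, seen):
                return True
        return False

    return {node for node in firing if not has_firing_dependency(node, set())}


# Users see high latency because the database's disk is the bottleneck.
print(root_causes({"checkout-page", "database-disk"}, DEPENDS_ON))  # {'database-disk'}
# The database is fine; the VMs are the problem, so look there.
print(root_causes({"checkout-page", "api", "vm-pool"}, DEPENDS_ON))  # {'vm-pool'}
```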

Metrics should be measured over a long enough time interval to avoid noise, but the tools don’t nudge you towards such best practices.
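One common way to avoid reacting to noise is to require a breach to persist: fire only when at least m of the last n datapoints cross the threshold. A minimal sketch; the class name and the m, n and threshold values are illustrative assumptions.

```python
from collections import deque


class SustainedBreachAlarm:
    """Fire only if at least `m` of the last `n` datapoints breach `threshold`.

    One way to ignore momentary spikes; m, n and the threshold are illustrative.
    """

    def __init__(self, threshold: float, m: int, n: int):
        if not 0 < m <= n:
            raise ValueError("need 0 < m <= n")
        self.threshold = threshold
        self.m = m
        self.recent = deque(maxlen=n)  # True/False per datapoint; old ones fall off

    def observe(self, value: float) -> bool:
        self.recent.append(value > self.threshold)
        return sum(self.recent) >= self.m


spike = SustainedBreachAlarm(threshold=500, m=3, n=5)         # latency in ms
print([spike.observe(v) for v in [120, 900, 130, 110, 140]])  # one spike: never fires

sustained = SustainedBreachAlarm(threshold=500, m=3, n=5)
print([sustained.observe(v) for v in [900, 950, 130, 980]])   # fires on the 4th point
```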

There are too many monitoring tools — some are first-party, like Google Cloud Monitoring and AWS CloudWatch.

Some are third-party SaaS tools like PagerDuty, Squadcast, Splunk, Blameless, FireHydrant, Kintaba, Opsgenie, Zenduty and more.

There are also open-source tools like Prometheus that you need to host yourself.

Finding the right tool among this huge set of similar-looking tools is a task in itself. Many of them are copies of each other, like Gmail vs Outlook.com Mail, just with a different UX rather than any fundamental difference. If you’re considering starting a startup in this area, don’t — it will be hard to stand out from the crowd of all these websites saying basically the same thing. I’m not seeing much fundamental innovation.

Some of the monitoring products are extremely sophisticated and have a steep learning curve. One of them took me a month to learn. They’re like Photoshop, which is powerful enough for any kind of photo editing, but unless you’re an extremely advanced user, simpler, easier-to-use tools will serve you better.

Some tools can’t generate alerts themselves; they only receive and process them, handling on-call schedules, assignments, escalations, retrospectives, and so on. I’d rather not use these, because they’re yet another tool to learn, configure, and integrate.