How To Build Servers That Don't Crash When Overloaded

A server has a certain capacity, say, 400 requests / sec. What happens if it’s overloaded to 600 rps? Ideally, it should process 400 rps successfully, while erroring out the remaining 200.

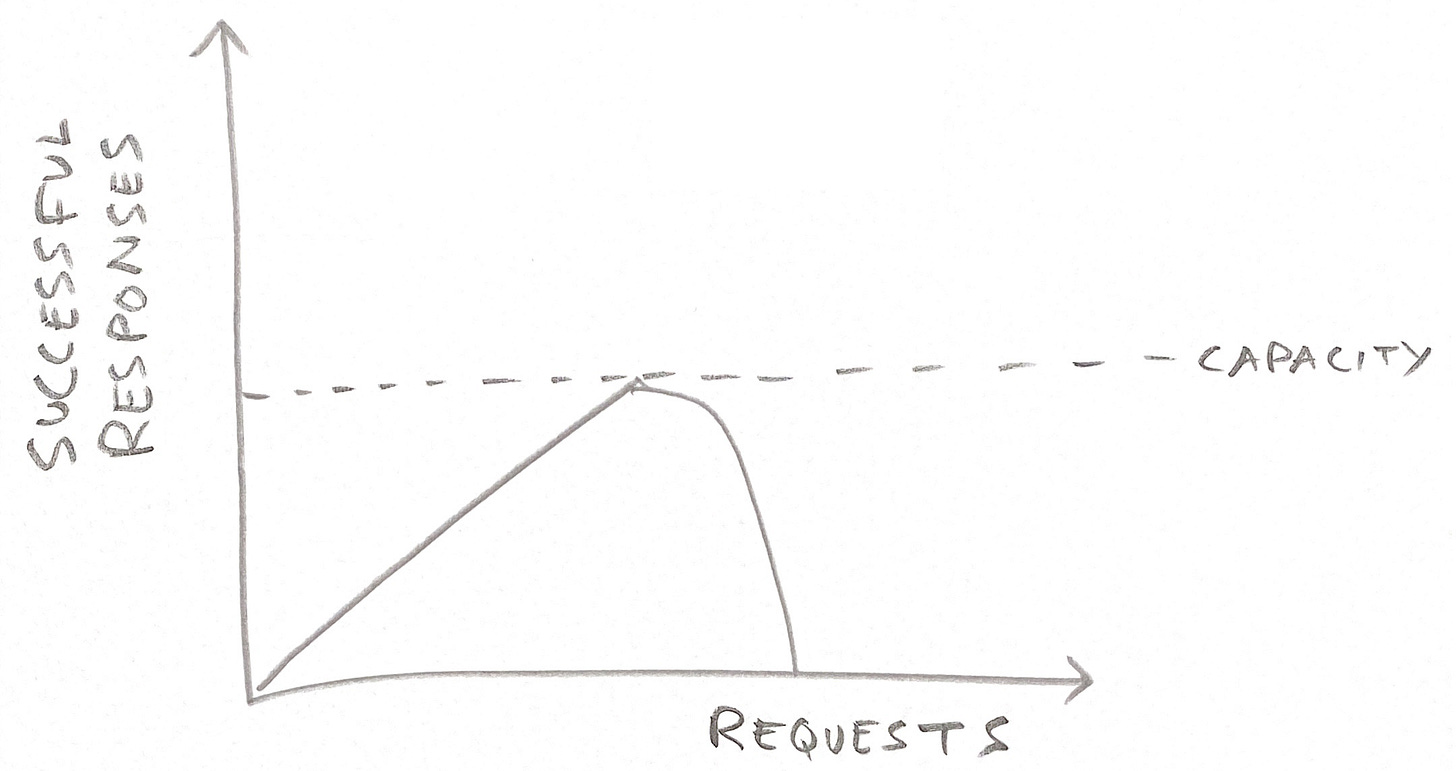

Unfortunately, overloaded servers often generate fewer successful responses, such as 200 successful responses and 400 failures, as this infographic shows:

This is obviously bad for system stability. It can lead to production incidents or even outages.

You want this behavior:

How can we architect servers to behave this way?

First, backpressure: Overloaded servers should stop accepting more requests. Just stop reading from the TCP connection to the load balancer. This causes TCP to eventually prevent sending more requests to the overloaded server. This is no different from a pipe: if you close a tap on the receiving side, the pressure increases, which propagates back up the pipe to the sending side, causing it to stop sending more water. If you didn’t have this, the water would still flow down, smashing into the tap and destroying it [1].
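
The same mechanism can be sketched inside a single process. This is only an analogue of the TCP behaviour described above, not a network server: a bounded `queue.Queue` stands in for the pipe, and the names `run_pipeline`, `capacity` and `work_seconds` are made up for this illustration.

```python
import queue
import threading
import time


def run_pipeline(n_requests, capacity, work_seconds=0.001):
    """Push n_requests through a bounded buffer drained by one slow worker.

    Returns the processed items and the deepest the buffer ever got.
    """
    buf = queue.Queue(maxsize=capacity)  # the "pipe": holds at most `capacity` items
    processed = []

    def worker():
        while True:
            item = buf.get()
            if item is None:  # sentinel: no more requests
                break
            time.sleep(work_seconds)  # simulate slow processing
            processed.append(item)

    t = threading.Thread(target=worker)
    t.start()

    max_depth = 0
    for i in range(n_requests):
        # put() blocks while the buffer is full, so the sender is slowed
        # down to the worker's pace. That blocking is the backpressure.
        buf.put(i)
        max_depth = max(max_depth, buf.qsize())

    buf.put(None)
    t.join()
    return processed, max_depth
```

Calling `run_pipeline(50, capacity=5)` processes all 50 items in order, and the buffer never holds more than 5 of them, however fast the sender tries to go.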

How do servers recognise they’re overloaded? The two critical parameters are memory and CPU. As for memory, before accepting a request, the server can check how much free memory it has, and if it’s below a configurable threshold, stop accepting more requests. If it did accept more requests, it would run out of memory and crash [2], and we don’t want that.
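
A minimal sketch of that memory check, under stated assumptions: `MemoryGate`, `may_accept` and `min_free_bytes` are hypothetical names, and `linux_available_bytes` relies on `os.sysconf` names that exist on Linux but not on every platform. The probe is passed in as a callable so it can be swapped for whatever your runtime actually exposes.

```python
import os
from typing import Callable


def linux_available_bytes() -> int:
    """Rough free physical memory in bytes. Assumption: Linux-only sysconf names."""
    return os.sysconf("SC_AVPHYS_PAGES") * os.sysconf("SC_PAGE_SIZE")


class MemoryGate:
    """Decides whether the server may accept one more request."""

    def __init__(self, free_bytes: Callable[[], int], min_free_bytes: int):
        self._free_bytes = free_bytes          # probe returning current free memory
        self._min_free_bytes = min_free_bytes  # the configurable threshold

    def may_accept(self) -> bool:
        # Below the threshold: stop accepting, which applies backpressure.
        return self._free_bytes() >= self._min_free_bytes
```

The accept loop would call `may_accept()` before each accept and simply wait and re-check when it returns `False`, for example with `MemoryGate(linux_available_bytes, min_free_bytes=256 * 1024 * 1024)`, where the threshold is an arbitrary illustrative value.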

As for CPU, the thread [3] that accepts new connections should run with the lowest priority, lower even than garbage collection and other threads that we think of as background threads but that are still critical for continued operation of the server. This ensures that as the CPU gets busy, it naturally adds a delay in accepting new requests, applying backpressure. As the CPU utilisation increases, this delay naturally increases, increasing the backpressure. If the CPU is 100% utilised for 10 seconds, it won’t accept any requests for that time period, thus applying a lot of backpressure.

Backpressure should apply at all layers: load balancers, app servers, databases, etc. [4]

Second, overloaded servers sometimes get into a situation where requests are being partially processed and then timing out [5]. This is a problem because the resources spent processing that request are wasted. They could instead have been used to process another request and prevent it from timing out. Imagine a restaurant that’s so slow that by the time they deliver a dish to a table, those people have walked out. If the restaurant had instead prioritised cooking for a different table, they might have been able to serve that table in time, before those people walked out too. To prevent this situation, servers should prioritise partially processed requests over new ones. Backpressure does this, but there could be other ways. If a request has four steps in its processing, steps two, three and four could run in a maximum priority thread, and step one in the lowest priority thread.
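
One way to picture "partially processed requests first" is a priority queue keyed by how far along each request is. This is a sketch rather than the thread-priority scheme itself: it generalises the two-level idea above to "furthest along runs first", and `StepScheduler`, `submit`, `run_one` and `has_work` are hypothetical names.

```python
import heapq
from itertools import count

TOTAL_STEPS = 4  # each request has four processing steps, as in the text


class StepScheduler:
    """Runs one step at a time, always choosing the request that is furthest along."""

    def __init__(self):
        self._heap = []
        self._seq = count()  # tie-breaker: earlier submissions go first

    def submit(self, request_id, next_step=1):
        # heapq is a min-heap, so negate the step to pop the highest step first.
        heapq.heappush(self._heap, (-next_step, next(self._seq), request_id))

    def has_work(self):
        return bool(self._heap)

    def run_one(self):
        neg_step, _, request_id = heapq.heappop(self._heap)
        step = -neg_step
        if step < TOTAL_STEPS:
            # Re-queue for the following step, now at a higher priority.
            self.submit(request_id, step + 1)
        return request_id, step
```

If requests `"A"` and `"B"` are submitted together, the scheduler runs all four steps of `"A"` before touching step one of `"B"`, so work already invested in `"A"` is not left to time out.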

Third, servers sometimes have queues at multiple layers. For example, ELB has a surge queue to hold requests that the backends can’t handle. Queues can create the same problem: a request might sit in the queue so long that when the server starts processing it, it doesn’t have enough time to complete it before it times out. I wonder if a stack is a better data structure than a queue: it prioritises newer requests, which have a lower chance of timing out.
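
A toy simulation can make the queue-versus-stack question concrete. It assumes a single worker, a fixed service time, and a server that still processes requests whose deadline has already passed (the wasted work described earlier). The function name and parameters are invented for this sketch, and the numbers are illustrative rather than measurements.

```python
from collections import deque


def served_in_time(arrivals, service_time, timeout, lifo):
    """Count requests that finish within `timeout` of arriving.

    arrivals: sorted arrival times (same unit as service_time and timeout).
    lifo: True serves the newest waiting request first (stack),
          False serves the oldest first (queue).
    """
    pending = deque()
    clock = 0
    i = 0
    ok = 0
    while i < len(arrivals) or pending:
        # Admit everything that has arrived by now.
        while i < len(arrivals) and arrivals[i] <= clock:
            pending.append(arrivals[i])
            i += 1
        if not pending:
            clock = arrivals[i]  # idle until the next arrival
            continue
        arrived = pending.pop() if lifo else pending.popleft()
        clock += service_time  # the work is done even if the deadline has passed
        if clock - arrived <= timeout:
            ok += 1
    return ok


# 20 requests, one every 500 ms, each needing 1000 ms: 2x overload.
arrivals = [500 * k for k in range(20)]
fifo_ok = served_in_time(arrivals, service_time=1000, timeout=2000, lifo=False)
lifo_ok = served_in_time(arrivals, service_time=1000, timeout=2000, lifo=True)
```

In this particular setup the queue completes 3 requests in time and the stack completes 11, because the queue keeps spending its capacity on requests that are already too old to succeed.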

Maybe you have a better idea to architect servers that don’t crash when overloaded. If so, share it in the comments. Whatever the specific ideas, servers should be architected to not crash when overloaded.

[1] Or the pipe.

[2] Garbage-collected languages like Java can instead enter a GC death spiral, where all the CPU is spent garbage-collecting. This happens because garbage collection algorithms trade off CPU usage against memory usage: if you have little free memory, the CPU usage shoots up.

Or the system could begin paging, if you mis-configured your system to enable paging.

Whichever way you look at it, running out of memory is bad.

How do you test that the server applies backpressure when it runs out of memory? Choose an instance type with the highest possible ratio of CPU to memory, and send it a lot of traffic. It should eventually run low on memory, at which point you can see if it’s crashing or otherwise misbehaving. The high CPU ensures that the CPU is not the bottleneck. If that’s not enough to reproduce a low memory situation, decrease the heap size, such as by reducing -Xmx in a Java program.

[3] In a single-threaded server like Node, the callback that accepts new connections can still have the lowest priority compared to other callbacks that are waiting to be run. If V8 doesn’t support that, it should be extended to.

[4] Any critical resource should be taken into account to apply backpressure. For example, if a server uses temporary files to process requests, but the server is low on disk space, apply backpressure. Or if it’s using a task queue that has a fixed capacity, take that into account. When a server chooses not to apply backpressure, it’s guaranteeing that it will process the next request, and it shouldn’t make that guarantee if it can’t honor it.

[5] This includes a human timeout, where the user has given up and moved on to another site or app even if it technically hasn’t timed out.