RDS DevOps Guide

Use managed services like RDS to reduce overhead compared to running your own database.

Pick one database, database version and configuration and standardise it across all your instances.

Pick the version that has the longest support window. If there are multiple, pick the latest. For Postgres, that’s March 2025:

If the version you’re on will reach end of life sooner, upgrade. If you’re using 13.8, you could upgrade to any of the rows marked in purple. Among them, pick the latest, which is 16.1.
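The selection rule above (longest support window first, latest version as the tie-breaker) can be sketched in a few lines. The function name `pick_version` and the dates below are placeholders made up for illustration; look up the real end-of-support dates in the RDS documentation before relying on this.

```python
from datetime import date


def pick_version(end_of_support: dict[str, date]) -> str:
    """Return the version supported the longest; break ties with the newest version."""
    def key(version: str):
        # Compare "16.1" and "15.5" numerically, not as strings.
        numeric = tuple(int(part) for part in version.split("."))
        return (end_of_support[version], numeric)

    return max(end_of_support, key=key)


# Placeholder dates for illustration only; NOT real RDS end-of-support dates.
candidates = {
    "14.10": date(2030, 1, 1),
    "15.5": date(2031, 1, 1),
    "16.1": date(2031, 1, 1),
}
print(pick_version(candidates))  # 16.1
```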

RDS databases have parameter groups (to configure the database engine) and option groups (to enable additional database features). Use the standard ones RDS gives you, as much as possible.

Databases live inside a VPC and are therefore protected by the VPC’s security group. Only authorised traffic can enter the VPC, let alone touch the database.

Enable storage autoscaling — otherwise, you can run out of space and have an outage. Set the autoscaling limit to 10x your current storage, or a year’s growth.
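As a rough sketch of that rule of thumb: the helper below takes the larger of "10x current storage" and "current storage plus a year of growth". Taking the larger of the two is my reading of the rule, and `autoscaling_limit_gb` and `monthly_growth_gb` are hypothetical names, so adjust to taste.

```python
import math


def autoscaling_limit_gb(current_gb: int, monthly_growth_gb: float = 0.0) -> int:
    """Suggest an autoscaling limit: 10x current storage or a year's growth, whichever is larger."""
    ten_x = current_gb * 10
    one_year = current_gb + math.ceil(monthly_growth_gb * 12)
    return max(ten_x, one_year)


print(autoscaling_limit_gb(20))        # 200
print(autoscaling_limit_gb(100, 150))  # 1900
```

The result is what you would pass as the maximum allocated storage when enabling autoscaling.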

When setting up a database, choose a storage size of 20 GB unless you know better, since 20 GB is the minimum RDS allows, and you can’t downsize later.

Upgrading the major version of your database can cause an outage, so test it on staging first.

RDS can automatically apply minor version updates. Turn this on.

Enable Performance Insights to understand which SQL queries are imposing the most load.

If your database is unable to keep up, identify what the bottleneck is — memory, SSD speed, CPU — and upgrade that. Don’t blindly upgrade to a bigger instance type. In fact, database servers are typically bottlenecked on memory first and SSD IOPS second, since their job is to move large quantities of data back and forth. CPU is typically not the bottleneck. So you’ll get better performance with an instance class that has proportionally more memory for the same CPU.
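To make "proportionally more memory for the same CPU" concrete, here is a small sketch that compares memory per vCPU across candidate instance classes. The two entries are only illustrative (a general-purpose class and a memory-optimised class of the same size); check the current RDS instance class tables for the classes you are actually considering.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InstanceClass:
    name: str
    vcpus: int
    memory_gib: int

    @property
    def gib_per_vcpu(self) -> float:
        return self.memory_gib / self.vcpus


# Illustrative figures; verify against the current RDS documentation.
candidates = [
    InstanceClass("db.m6g.large", vcpus=2, memory_gib=8),
    InstanceClass("db.r6g.large", vcpus=2, memory_gib=16),
]

# Same CPU, so prefer the class with the most memory per vCPU.
best = max(candidates, key=lambda c: c.gib_per_vcpu)
print(best.name, best.gib_per_vcpu)  # db.r6g.large 8.0
```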

RDS automatically backs up the database periodically, and retains the backups for a specified duration. Make sure this retention period is not zero, because zero means not having any backups. Set it to the maximum allowed, 35 days.

RDS lets you configure a maintenance window, during which the database may be unavailable for a minute. Configure this to off-peak hours. RDS also has a backup window during which performance may be slightly affected. Configure this to abut the maintenance window.
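One way to get two abutting windows is to derive the backup window from the maintenance window. The sketch below assumes the backup should end exactly when maintenance begins (the text only says the windows should abut, so the order is my choice) and produces strings in the formats RDS uses for these settings: `hh24:mi-hh24:mi` for the backup window and `ddd:hh24:mi-ddd:hh24:mi` for the maintenance window, both in UTC. The function name is hypothetical.

```python
from datetime import datetime, timedelta

_DAYS = ["mon", "tue", "wed", "thu", "fri", "sat", "sun"]


def abutting_windows(day: str, start: str, maintenance_minutes: int = 30,
                     backup_minutes: int = 30) -> tuple[str, str]:
    """Return (backup_window, maintenance_window), with the backup ending as maintenance begins."""
    hour, minute = (int(part) for part in start.split(":"))
    # Anchor on an arbitrary week: 2024-01-01 was a Monday.
    m_start = datetime(2024, 1, 1 + _DAYS.index(day), hour, minute)
    m_end = m_start + timedelta(minutes=maintenance_minutes)
    b_start = m_start - timedelta(minutes=backup_minutes)

    def day_time(dt: datetime) -> str:
        return f"{_DAYS[dt.weekday()]}:{dt:%H:%M}"

    backup = f"{b_start:%H:%M}-{m_start:%H:%M}"
    maintenance = f"{day_time(m_start)}-{day_time(m_end)}"
    return backup, maintenance


print(abutting_windows("sun", "05:00"))
# ('04:30-05:00', 'sun:05:00-sun:05:30')
```

Remember that the times are UTC, so convert your off-peak hours before plugging them in.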

Host your app servers in the same availability zone as your database, for lower latency and lower cost (inter-AZ traffic is charged). This also increases availability, because hosting the app servers and the database in two different zones means that a failure of either zone takes down your service.

Enable deletion protection to prevent someone from accidentally deleting your database.
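Several of the settings recommended so far can be collected in one place. The sketch below only builds a dictionary; the keys are parameter names accepted by boto3's `modify_db_instance`, while the function name and the `my-database` identifier are made up for illustration.

```python
def recommended_settings(db_id: str, max_allocated_storage_gb: int) -> dict:
    """Collect the settings recommended above as modify_db_instance keyword arguments."""
    return {
        "DBInstanceIdentifier": db_id,
        "MaxAllocatedStorage": max_allocated_storage_gb,  # storage autoscaling limit
        "AutoMinorVersionUpgrade": True,                  # automatic minor version updates
        "EnablePerformanceInsights": True,
        "BackupRetentionPeriod": 35,                      # days; the maximum allowed
        "DeletionProtection": True,
    }


settings = recommended_settings("my-database", 200)
for name, value in settings.items():
    print(f"{name} = {value}")

# With boto3 installed and credentials configured, this could be applied as:
#   boto3.client("rds").modify_db_instance(**settings)
```

Keeping the settings in code like this also makes it easier to apply the same configuration to every instance.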

Be careful with your database passwords — choose secure ones, share them with everyone who will be on-call, but with nobody else.

You can take a snapshot of a database before doing anything risky.

In addition to manual snapshots, RDS has automated backups. These are more flexible, in that they let you restore to any point in time within a certain window, rather than only one point as with snapshots.

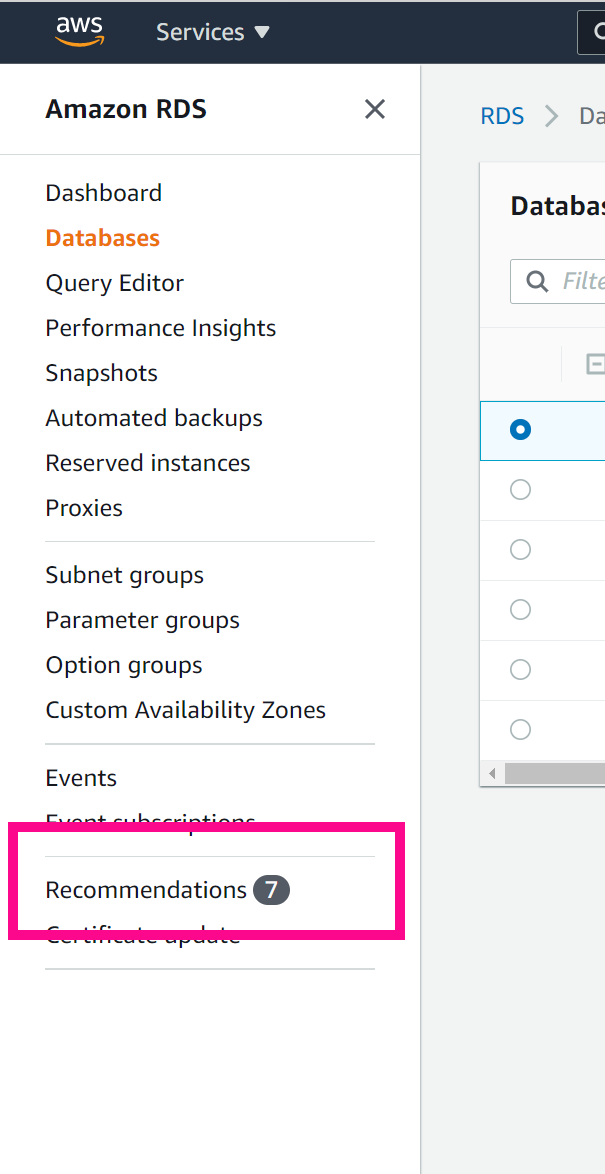

Go through RDS recommendations:

Look at the Maintenance column. If maintenance is due, some will be automatically applied by RDS, but some won’t be. The latter are marked “Available”. So you should manually apply such pending maintenance:

Use Multi AZ, because it’s less likely to lose data and has a higher uptime according to the SLA [1].

Don’t use a read replica [2].

Choosing an instance type

Don’t use a micro instance, which isn’t covered by the SLA. This isn’t just legalese: Amazon is telling us that it may not work properly. Choose small, which has 2 GB memory, or bigger.

Use the latest generation, since earlier generations have a worse price/performance ratio. Sometimes, they’re costlier and slower. Sometimes, they’re marginally cheaper, but perform worse, which again isn’t worth it.

Don’t use a burstable instance [3].

Alarms

Set alarms to fire if:

… the minimum CPUCreditBalance over 1 minute reaches 0 (if applicable).

… the minimum EBSByteBalance% over 1 minute reaches 0.

… the minimum EBSIOBalance% over 1 minute reaches 0. What to do if this alarm fires: increase storage by 10% [4].

… the minimum BurstBalance over 1 minute reaches 0 [5].

… the minimum FreeableMemory over 1 minute falls below 5%. What to do if this alarm fires: upgrade to an instance that has more memory and at least as much CPU as your current instance.

… the maximum number of connections over 1 minute reaches the limit on the number of connections (specified in the parameter group).

… the average read latency [6] over an hour exceeds 1 ms.

… the average write latency over an hour exceeds 1 ms.

… the minimum ReplicaLag over an hour is at least 1 minute. This applies only to a read replica. What to do if this alarm fires: Check if the read replica is a smaller instance type. RDS recommends at least the same resources on the read replica as the primary. Identify the bottleneck and increase that resource.

… the average CPUUtilization over an hour > 80%. What to do if this alarm fires: Upgrade to an instance that has more CPU and at least as much memory as your current instance type.
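The alarm list above can be written down as data. The sketch below builds one dictionary per alarm, using parameter names accepted by CloudWatch's `put_metric_alarm`; it does not call AWS. The function name, the alarm naming scheme, and the `burstable` / `is_replica` flags are my own assumptions. Note the units: RDS reports latency and replica lag in seconds and FreeableMemory in bytes, so "1 ms" becomes 0.001 and "5%" has to be computed from the instance's memory.

```python
def rds_alarms(db_id, instance_memory_bytes, max_connections,
               burstable=False, is_replica=False):
    """Build put_metric_alarm keyword arguments, one dict per alarm in the list above."""
    minute, hour = 60, 3600
    # (metric, statistic, period in seconds, comparison operator, threshold)
    rules = [
        ("CPUCreditBalance", "Minimum", minute, "LessThanOrEqualToThreshold", 0),
        ("EBSByteBalance%", "Minimum", minute, "LessThanOrEqualToThreshold", 0),
        ("EBSIOBalance%", "Minimum", minute, "LessThanOrEqualToThreshold", 0),
        ("BurstBalance", "Minimum", minute, "LessThanOrEqualToThreshold", 0),
        ("FreeableMemory", "Minimum", minute, "LessThanThreshold",
         0.05 * instance_memory_bytes),
        ("DatabaseConnections", "Maximum", minute, "GreaterThanOrEqualToThreshold",
         max_connections),
        ("ReadLatency", "Average", hour, "GreaterThanThreshold", 0.001),   # seconds
        ("WriteLatency", "Average", hour, "GreaterThanThreshold", 0.001),  # seconds
        ("ReplicaLag", "Minimum", hour, "GreaterThanOrEqualToThreshold", 60),  # seconds
        ("CPUUtilization", "Average", hour, "GreaterThanThreshold", 80),
    ]
    skipped = set()
    if not burstable:
        skipped.add("CPUCreditBalance")  # the "(if applicable)" alarm
    if not is_replica:
        skipped.add("ReplicaLag")        # applies only to a read replica
    return [
        {
            "AlarmName": f"{db_id}-{metric.rstrip('%')}",
            "Namespace": "AWS/RDS",
            "MetricName": metric,
            "Dimensions": [{"Name": "DBInstanceIdentifier", "Value": db_id}],
            "Statistic": statistic,
            "Period": period,
            "EvaluationPeriods": 1,
            "ComparisonOperator": operator,
            "Threshold": threshold,
        }
        for metric, statistic, period, operator, threshold in rules
        if metric not in skipped
    ]


# Hypothetical instance: 8 GiB of memory, connection limit of 100.
for alarm in rds_alarms("my-database", 8 * 1024**3, 100):
    print(alarm["AlarmName"], alarm["Statistic"], alarm["Period"])
```

Each dictionary could be passed to `boto3.client("cloudwatch").put_metric_alarm(**alarm)`; you would normally also add an alarm action (for example an SNS topic) so that someone is notified.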

[1] With Multi AZ, transaction commit latency does increase by a few ms, but it’s worth it.

There’s also Multi AZ “with two standbys” (which Amazon also refers to as “Multi-AZ cluster”). I wouldn’t recommend this because it has a whole bunch of limitations:

Multi-AZ DB clusters support only Provisioned IOPS storage.

You can't change a Single-AZ DB instance deployment or Multi-AZ DB instance deployment into a Multi-AZ DB cluster, or vice-versa.

Multi-AZ DB clusters don't support the following features:

Exporting Multi-AZ DB cluster snapshot data to an Amazon S3 bucket

Point-in-time-recovery (PITR) for deleted clusters

Restoring a Multi-AZ DB cluster snapshot from an Amazon S3 bucket

Storage autoscaling by setting the maximum allocated storage

Copying a snapshot of a Multi-AZ DB cluster

RDS for PostgreSQL Multi-AZ DB clusters don't support the following PostgreSQL extensions: `aws_s3` and `pg_transport`.

[2] Don’t use a read replica unless you know what you’re signing up for:

Reading from a read replica can return stale data. Imagine you update your Twitter profile (write) and when you save, it reverts back to its old state. This would seem broken, right? This can happen if the read for the profile after editing was served from a stale read replica.

A poorly run read replica can affect the main instance. You need to monitor the ReplicaLag.

A read replica increases DevOps work: you need to choose a database version and instance type, configure it, set up alarms to monitor it, manually apply maintenance and DB upgrades…
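The stale-read scenario described above is easy to reproduce with a toy model. This is not how RDS replication is implemented; it is a minimal in-memory simulation, with made-up class names, in which the replica only sees a write once it has replayed the primary's log.

```python
class Primary:
    def __init__(self):
        self.data = {}
        self.log = []  # ordered list of (key, value) writes

    def write(self, key, value):
        self.data[key] = value
        self.log.append((key, value))


class Replica:
    def __init__(self, primary):
        self.primary = primary
        self.data = {}
        self.applied = 0  # number of log entries replayed so far

    def catch_up(self):
        """Replay any writes the replica has not seen yet (this is where the lag lives)."""
        for key, value in self.primary.log[self.applied:]:
            self.data[key] = value
        self.applied = len(self.primary.log)

    def read(self, key):
        return self.data.get(key)


primary = Primary()
replica = Replica(primary)

primary.write("bio", "old bio")
replica.catch_up()

primary.write("bio", "new bio")  # the user saves their profile
stale = replica.read("bio")      # served before the replica has caught up
replica.catch_up()
fresh = replica.read("bio")

print(stale, "->", fresh)  # old bio -> new bio
```

A common way around this is to serve a user's reads from the primary for a short while after they write, which is one more thing to build and maintain.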

[3] Why avoid burstable instances?

Monitoring becomes harder: Say you’ve set an alarm to fire if the average CPU utilisation over 1 hour > 60%. It doesn’t fire. You check the graph and it shows that the CPU utilisation is constant at 10%. Does that mean that CPU is not a problem? Not for burstable instances, where you might be entitled to only 10% CPU sustained. You’re out of CPU and it doesn’t look like it.

Billing: Burstable instances can incur extra charges depending on how much CPU you use, as compared to non-burstable ones, where the price is known up front. It can even cost more than a non-burstable instance. Would you rather get a predictable bill of $200/month or an unpredictable bill of anywhere between $100 and $300/month?

If you (or someone on your team) don’t understand all of the above, that’s exactly the point: stay away from burstable instances, and you won’t need to.

[4] Why only 10%? Because if you want to increase it by more, you might end up in discussions with people, debating whether the proposed increase is overkill, and so on. You want to act quickly without getting caught in analysis paralysis.

There can be two separate burst quotas: one for the EBS volume, and one for the instance itself. Exceeding either quota causes throttling. The two metrics EBSIOBalance% and EBSByteBalance% are for the instance. In other words, even if you attach an EBS volume that can sustain extremely high IOPS without throttling, the instance itself can still throttle. Think of it as connecting a superfast SSD to an old computer: the computer itself is the bottleneck.

Why is this important? Because latency increases dramatically. In one case, it went from 100 ms to 10 s, as measured at the load balancer.

[5] This burst quota is for the EBS volume itself.

[6] This is the latency the database experiences from the underlying EBS volume, not the latency of the database itself.